This has now come up twice this week, so I thought I'd float it. In my experience, checking student answers by evaluating functions at a set of points works very well in general. But it has an undesirable side effect: an inexact student answer may be marked correct on one submission and incorrect on another.
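To make the mechanism concrete, here is a minimal Python sketch of checking by evaluation at random points with a relative tolerance. It is not WeBWorK's code. The interval [-2, 2], the five test points and the tolerance 0.001 are assumptions meant to resemble common defaults. The helper names `values_agree` and `check_by_points` and the example functions are hypothetical.

```python
import random

# Assumed settings meant to resemble common WeBWorK defaults; the real
# checker has further details that this sketch does not model.
LIMITS = (-2.0, 2.0)  # interval the test points are drawn from
NUM_POINTS = 5        # test points per check
TOL = 0.001           # relative tolerance


def values_agree(correct, student, tol=TOL):
    """Relative comparison: the allowed error scales with |correct value|."""
    return abs(correct - student) <= tol * abs(correct)


def check_by_points(correct_f, student_f, rng):
    """Draw fresh random test points and require agreement at every one."""
    points = [rng.uniform(*LIMITS) for _ in range(NUM_POINTS)]
    return all(values_agree(correct_f(x), student_f(x)) for x in points)


# Hypothetical example: the correct answer is x - 1 (zero at x = 1) and
# the student's answer is off by the constant 1e-4.
def correct(x):
    return x - 1


def student(x):
    return x - 1.0001


# Submit the same answer 1000 times, each with its own random test points.
verdicts = [check_by_points(correct, student, random.Random(seed))
            for seed in range(1000)]
print(f"accepted {sum(verdicts)} of {len(verdicts)} identical submissions")
```

A test point causes a rejection when it lands within about 0.1 of x = 1, where the correct value is small. Under these assumptions that happens on roughly 23% of submissions of this one answer in expectation, so the verdict depends on the random points and not only on the answer.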

Consider the problem snippet:

```perl
$a = random(1,9);
BEGIN_TEXT
\(f(x) = $a - e^x\) $BR
Find the equation of the tangent to \(f\) where it crosses the \(x\) axis: $BR
\(y = \) \{ ans_rule(20) \}
END_TEXT
ANS( Compute("-$a*(x - ln($a))")->cmp() );
```

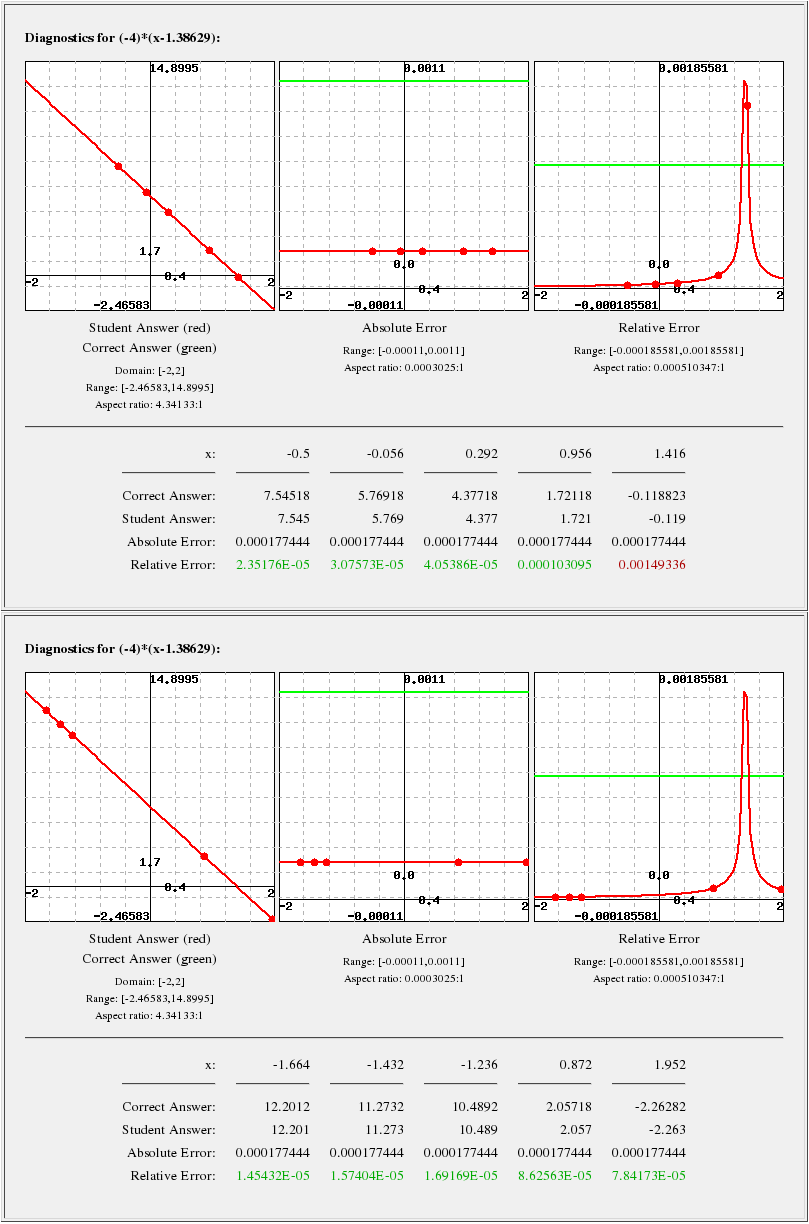

If `$a = 4`, the correct answer is y = -4x + 4ln(4), or approximately y = -4x + 5.54518. One student entered y = -4x + 5.545. On one of six attempts (the problem has other parts), one of the evaluation points fell close to x = 1.38625, where the student's answer is zero. At that point the correct answer is non-zero but tiny, so the error relative to it exceeded the allowed tolerance, and the answer was marked wrong.
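The arithmetic can be written out. This assumes the comparison is relative to the correct answer with tolerance 0.001 and that five test points are drawn uniformly from [-2, 2]; these are assumed defaults, not settings confirmed for this problem. The student's answer differs from the correct one by a constant d, so the relative error blows up near the zero of the correct answer at x = ln 4:

$$
\begin{aligned}
d &= 4\ln 4 - 5.545 \approx 1.774\times 10^{-4}\\
\frac{\lvert \mathrm{correct}(x) - \mathrm{student}(x)\rvert}{\lvert \mathrm{correct}(x)\rvert} &= \frac{d}{4\,\lvert x - \ln 4\rvert} > 0.001
\iff \lvert x - \ln 4\rvert < \frac{d}{0.004} \approx 0.0444\\
P(\text{a given test point lands in that window}) &\approx \frac{2 \times 0.0444}{4} \approx 0.0222\\
P(\text{at least one of five points does}) &\approx 1 - (1 - 0.0222)^5 \approx 0.106
\end{aligned}
$$

At x = 1.38625 itself the student's value is 0 and the correct value is exactly d, so the relative error there is 1, i.e. 100%. Under these assumptions roughly one submission in ten of this answer would be marked wrong.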

The tricky part is that the singularity depends on the student's answer, so it isn't straightforward to avoid when checking answers. That isn't entirely true, of course: a nearly correct answer has its zero close to the zero of the correct solution, so if we keep the test points away from zeros of the correct solution, we also avoid the approximate zeros of the student's answer.
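Here is a minimal Python sketch of that workaround, again not WeBWorK's implementation. It assumes test points drawn from [-2, 2] and a relative tolerance of 0.001, and it redraws any point that lands within a chosen margin of a known zero of the correct answer. The helper names and the margin of 0.5 are hypothetical choices for illustration.

```python
import math
import random

TOL = 0.001  # assumed relative tolerance


def values_agree(correct, student, tol=TOL):
    """Relative comparison against the correct value."""
    return abs(correct - student) <= tol * abs(correct)


def points_avoiding(zeros, margin, rng, limits=(-2.0, 2.0), n=5):
    """Rejection sampling: redraw any point within `margin` of a known zero."""
    points = []
    while len(points) < n:
        x = rng.uniform(*limits)
        if all(abs(x - z) > margin for z in zeros):
            points.append(x)
    return points


def check_avoiding_zeros(correct_f, student_f, zeros, margin, rng):
    """Compare only at test points kept away from the correct answer's zeros."""
    points = points_avoiding(zeros, margin, rng)
    return all(values_agree(correct_f(x), student_f(x)) for x in points)


a = 4
zeros = [math.log(a)]  # the correct answer -a(x - ln a) vanishes at ln a


def correct(x):
    return -a * (x - math.log(a))  # y = -4x + 4 ln 4


def rounded(x):
    return -4 * x + 5.545  # the student's rounded answer


def wrong(x):
    return -4 * x + 5.5  # a genuinely wrong intercept


ok = [check_avoiding_zeros(correct, rounded, zeros, 0.5, random.Random(s))
      for s in range(1000)]
bad = [check_avoiding_zeros(correct, wrong, zeros, 0.5, random.Random(s))
       for s in range(1000)]
print(all(ok), any(bad))  # prints: True False
```

With a margin of 0.5, |correct(x)| is at least 2 at every test point, so the rounding error of about 1.8e-4 stays well inside the tolerance, while the wrong intercept is still rejected at every point. The margin is a judgment call. It has to be wide compared with (rounding error) / (slope × tolerance), which is about 0.044 here, and the approach only works when the problem author knows where the correct answer vanishes.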

So: is this the best workaround (that is, when the correct solution has a zero, choosing test points away from it)? Is there a better one? Can or should the answer checker be modified so that the same student answer is not marked differently on different submissions?

I think this last issue, that a student's answer can be marked differently on subsequent submissions, is quite significant.

Thanks,

Gavin